The Core Problem: Complexity Has Outpaced the Tools

The complexity of modern product portfolios and multi-tier supply chains has outpaced what traditional EHS and sustainability tools can handle. Companies are now being asked product-specific, substance-level questions by customers, regulators, and investors. Most lack the integrated data infrastructure to answer them.

This is not a niche problem. Enterprise manufacturers managing millions of products and tens of thousands of chemical substances cannot generate reliable life cycle data, Scope 3 emissions figures, or audit-ready compliance records using spreadsheets, disconnected ERP systems, or manual research. The volume and precision required make human-scale processes structurally unworkable.

AI is entering this space not because it is fashionable, but because there is no other path to scale.

What Is Actually Working vs. What Is Still Hype

The panel was direct on this. AI in EHS and sustainability is generating real value today in specific, well-scoped use cases, and falling short where the underlying data foundation is missing.

What’s working today:

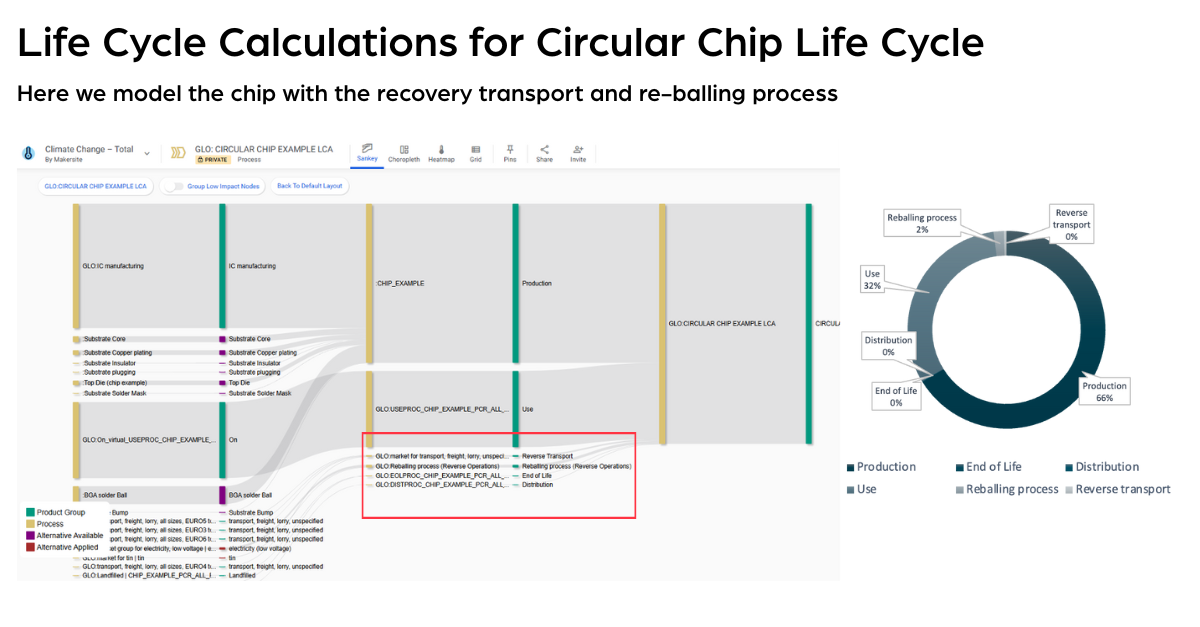

- Automated LCA and PCF generation at product and configuration level, where AI processes full material declarations, maps substance-level data to background databases, and generates traceable life cycle inventories without manual modeling effort.

- AI-assisted chemical data modeling for substances where no emission factors or LCI datasets exist, using synthesis and pathway data to fill gaps rather than defaulting to averages or proxies.

- Continuous compliance monitoring against expanding regulatory frameworks, where AI matches BOM-level data to substance watchlists in real time.

- Scope 3 supply chain mapping across multi-tier supplier networks, surfacing hotspots and prioritizing data collection where it matters most.
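To make the compliance-monitoring case concrete, here is a minimal Python sketch of matching BOM-level substance data against a watchlist. It is an illustration only, not Makersite's implementation: the `WATCHLIST` contents, the threshold values, the part IDs, and the `flag_bom` helper are all hypothetical, and real regulatory thresholds depend on the framework and on how the reference mass is defined.

```python
# Hypothetical watchlist: CAS number -> maximum allowed mass fraction.
# The thresholds are illustrative placeholders, not regulatory values.
WATCHLIST = {
    "7439-92-1": 0.001,  # lead
    "117-81-7": 0.001,   # DEHP
}

def flag_bom(bom_lines, watchlist=WATCHLIST):
    """Return BOM lines whose substance is on the watchlist and over its limit.

    bom_lines: iterable of (part_id, cas_number, mass_fraction) tuples.
    """
    return [
        (part_id, cas, fraction)
        for part_id, cas, fraction in bom_lines
        if cas in watchlist and fraction > watchlist[cas]
    ]

# Made-up BOM lines for illustration.
bom = [
    ("cable-01", "117-81-7", 0.02),     # on the watchlist, over the limit
    ("solder-07", "7439-92-1", 0.0005), # on the watchlist, under the limit
    ("housing-03", "9003-56-9", 0.9),   # not on the watchlist
]
flags = flag_bom(bom)
```

The matching itself is simple. It only works if every BOM line already carries a substance identifier and a mass fraction, which is the data foundation point the panel kept returning to.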

Still more promise than reality:

- Fully autonomous sustainability decision-making without expert validation. AI cannot produce ISO-compliant outputs without human oversight of methodology and data quality.

- Generic large language model deployments without deep sustainability domain training. The specificity of EHS methodology, LCA system boundaries, and substance-level compliance cannot be approximated by general-purpose models.

- AI layered on top of structurally broken data processes. Fragmented, siloed, unvalidated inputs produce unreliable outputs regardless of the model.

The consistent finding: AI works when it is applied to specific, high-impact use cases on a structured data foundation. It does not work as a substitute for that foundation.

The Real Bottleneck Is Data Readiness, Not Model Capability

One of the most technically substantive discussions in the keynote focused on where enterprise organizations actually get stuck. Not in AI capability, but in data readiness.

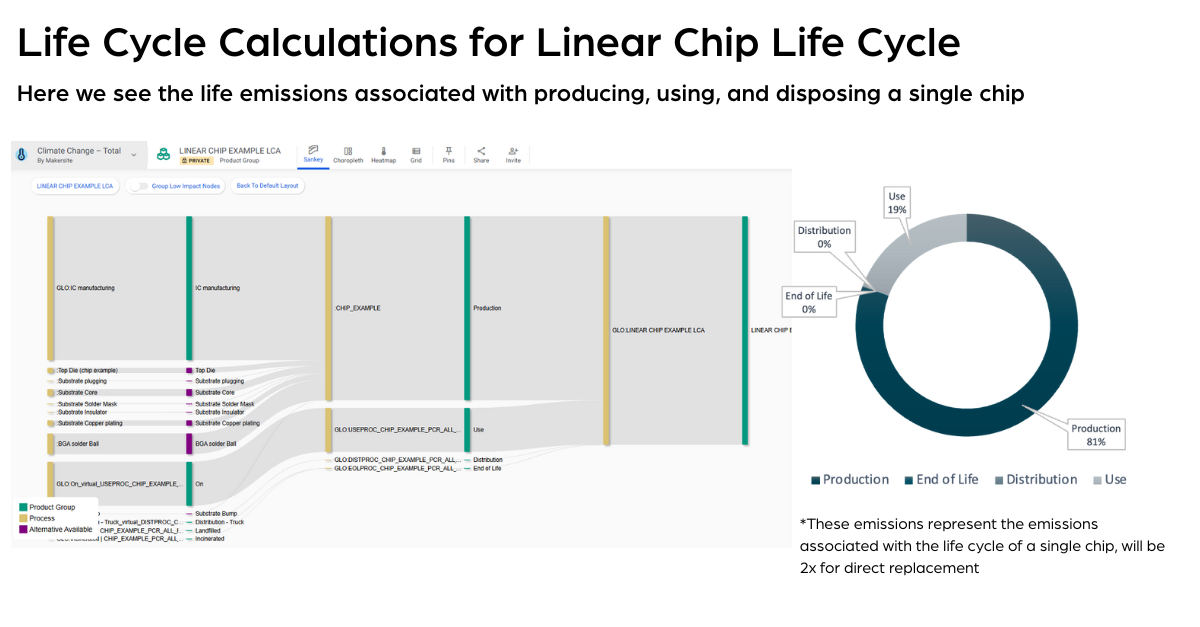

Consider what it takes to generate a product carbon footprint for a manufacturer with a complex chemical portfolio. Measured LCI datasets and emission factors exist for only a fraction of the substances involved. The remainder must be modeled from synthesis pathways, process data, or representative chemical categories. For a single product, dozens of custom LCA datasets may need to be generated from hundreds of candidate substances. Across a portfolio of millions of products, this is a multi-year data engineering challenge.

The approach that works is incremental and methodologically rigorous.

- Map existing coverage first. Identify what background database coverage already exists and where manufacturer-provided LCA data can be matched exactly. This scopes the true gap before any modeling begins.

- Prioritize by impact. Focus custom dataset generation on substances with the greatest frequency and material contribution across the portfolio. Starting with the most-used materials delivers meaningful coverage without attempting to solve everything at once.

- Model the long tail by category. Remaining substances can be grouped into chemical categories, such as solvent classes or inorganic groups, and represented by datasets with defined variance, min/max ranges, and documented error margins. This is scalable and auditable.

- Handle marginal contributors appropriately. Substances that contribute negligible quantities to the final product can be represented using high-level grouped data, such as average organic or inorganic chemical classifications, without materially affecting output accuracy.

- Align on methodology before scaling. ISO compliance requirements, error margin conventions, and how averages are applied in reporting must be agreed between technical and sustainability teams before outputs are used for external disclosure.
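The first four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any vendor's implementation: the `boms` and `covered` inputs, the function names, and the `marginal_share` cutoff are hypothetical. Total mass is used here as a simple stand-in for "frequency and material contribution"; a real prioritization would likely weight by impact as well.

```python
from collections import defaultdict

# Hypothetical portfolio: product -> {substance: mass in kg}.
boms = {
    "P1": {"acetone": 2.0, "toluene": 1.0, "rare_additive": 0.01},
    "P2": {"acetone": 3.0, "zinc_oxide": 0.5},
}
# Substances assumed to already have a matching background dataset.
covered = {"acetone"}

def portfolio_mass(boms):
    """Total mass across all products and substances."""
    return sum(m for substances in boms.values() for m in substances.values())

def mass_coverage(boms, covered):
    """Step 1: share of portfolio mass already covered by existing datasets."""
    hit = sum(m for s in boms.values() for name, m in s.items() if name in covered)
    return hit / portfolio_mass(boms)

def coverage_gap(boms, covered):
    """Step 2: uncovered substances, ranked by total mass across the portfolio."""
    totals = defaultdict(float)
    for substances in boms.values():
        for name, mass in substances.items():
            if name not in covered:
                totals[name] += mass
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)

def triage(boms, covered, marginal_share=0.005):
    """Steps 3 and 4: route each gap substance to a modeling strategy.

    Substances below marginal_share of portfolio mass get grouped average
    data; the rest are candidates for custom or category-level datasets.
    """
    total = portfolio_mass(boms)
    return {
        name: "grouped_average" if mass / total < marginal_share else "custom_or_category"
        for name, mass in coverage_gap(boms, covered)
    }

gap = coverage_gap(boms, covered)
plan = triage(boms, covered)
```

The cutoff for "marginal" is exactly the kind of convention the fifth step says must be agreed between technical and sustainability teams before results are disclosed.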

This is not a “plug in AI and get answers” workflow. It is a structured, expert-guided process in which AI dramatically accelerates each step. The methodological rigor is still required. Makersite is built to support exactly this kind of layered, scalable approach, from data ingestion and substance-level mapping through to audit-ready LCA and PCF outputs.

How the Sustainability Leader Role Changes in 3 to 5 Years

The panel’s view here was grounded rather than speculative.

The shift is not from human judgment to automated decision-making. It is from reactive reporting to real-time insight generation. Sustainability leaders who today spend significant time on data collection, supplier follow-up, and manual LCA modeling will increasingly function as analysts and strategists, interpreting AI-generated outputs, setting data quality standards, and embedding sustainability criteria directly into product design and procurement decisions.

The implication for organizations is clear: the value of the sustainability function is increasingly determined by the quality of its data infrastructure, not its headcount. Teams that build structured, auditable data pipelines now will have a structural advantage in regulatory readiness and decision speed within the 3 to 5 year window.

Where Scope 3 Is Hardest

Scope 3 emissions, particularly Category 1 purchased goods and services, remain the most difficult area, and the panel was specific about why.

The problem is not the emissions calculation. It is the absence of primary data at the supplier level. Most Scope 3 analyses rely on spend-based or industry-average approaches because supplier-specific, product-level emissions data does not exist in structured, accessible form. AI can model gaps with defined uncertainty, but it cannot compensate for missing primary data.
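A short Python sketch shows why the calculation is the easy part. It assumes a hybrid approach in which supplier-specific footprints are used where they exist and a spend-based factor is used otherwise. The `PurchasedItem` type, the `category1_emissions` helper, and all numbers are hypothetical placeholders, not real emission factors.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PurchasedItem:
    name: str
    spend_usd: float
    quantity_kg: float
    # Primary data from the supplier in kg CO2e per kg, if available.
    supplier_pcf_kg_per_kg: Optional[float] = None

def category1_emissions(items, spend_factor_kg_per_usd):
    """Sum emissions, preferring supplier data over the spend-based fallback."""
    primary = 0.0
    fallback = 0.0
    for item in items:
        if item.supplier_pcf_kg_per_kg is not None:
            primary += item.quantity_kg * item.supplier_pcf_kg_per_kg
        else:
            fallback += item.spend_usd * spend_factor_kg_per_usd
    total = primary + fallback
    return {
        "total_kg_co2e": total,
        # Share of the result backed by primary supplier data.
        "primary_share": primary / total if total else 0.0,
    }

# Made-up purchases for illustration.
items = [
    PurchasedItem("resin", spend_usd=1000.0, quantity_kg=500.0, supplier_pcf_kg_per_kg=2.0),
    PurchasedItem("fasteners", spend_usd=400.0, quantity_kg=50.0),  # no primary data
]
result = category1_emissions(items, spend_factor_kg_per_usd=0.5)
```

Reporting `primary_share` alongside the total makes the data gap visible instead of hiding it inside a single number.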

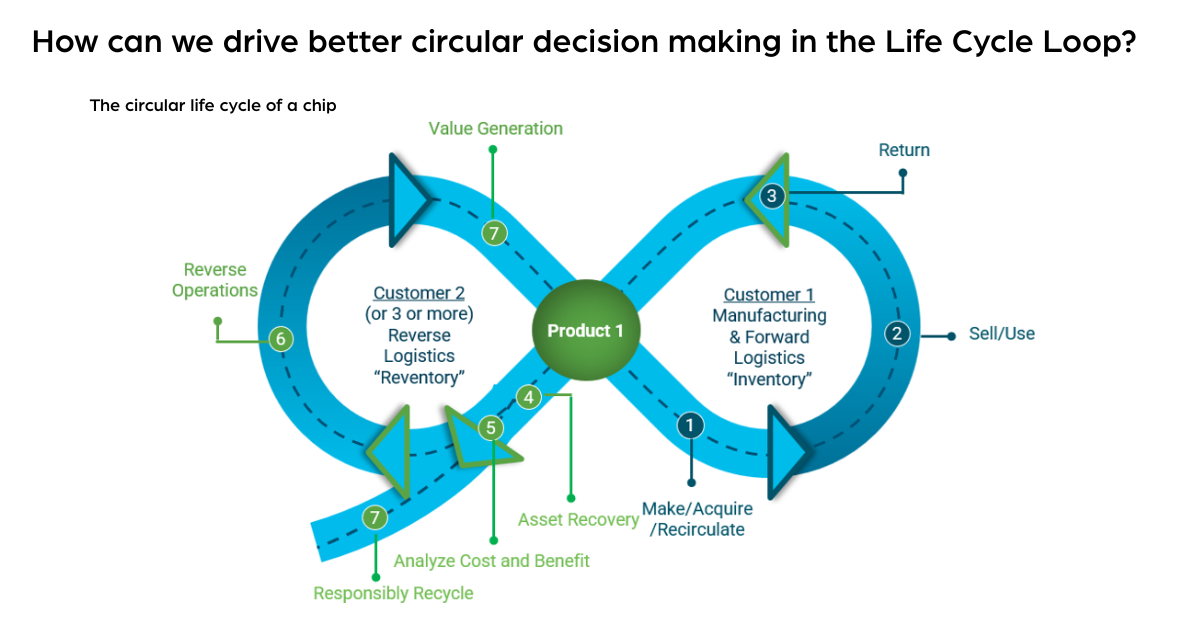

Organizations making the most progress on Scope 3 share three characteristics. They have built structured supplier data collection processes (full material declarations and BOM-level inputs) that feed directly into LCA and PCF workflows. They have invested in component-level modeling that can be reused across product families rather than rebuilt product by product. And they have established methodology alignment across sustainability, engineering, and commercial teams so that AI-generated outputs are trusted and acted upon.

The approach that consistently does not work: attempting to resolve Scope 3 at the portfolio level with aggregate methods while continuing to operate disconnected, product-level data systems.

What an AI-Enabled Sustainability Decision Looks Like at the Design Level

The most forward-looking discussion centered on product design, where the greatest leverage exists.

In the next three to five years, engineers making component selection decisions will have real-time access to sustainability impact data at the substance and configuration level. Selecting a different supplier or substituting a material will immediately surface its LCA, compliance, and Scope 3 implications before the decision is finalized, not weeks later during a sustainability review.

This is technically achievable today for organizations that have built the necessary data infrastructure. The constraint is not AI capability. It is the availability of structured, substance-level product and supplier data in a form that AI can use.

The leadership implication is significant: sustainability decisions in the next decade will increasingly be made by product engineers, not sustainability teams in isolation. The function of the sustainability team shifts to building and maintaining the data systems, methodological standards, and AI tooling that make those decisions possible at scale.

One non-negotiable: AI-generated sustainability outputs require full audit trails. ISO-aligned PCFs and LCAs need traceable, validated data lineages. Explainability is a technical requirement, not an optional feature.

Watch-Outs as AI Gets Embedded in EHS Workflows

The panel closed with a frank assessment of where AI adoption fails in EHS and sustainability.

Treating AI as a substitute for data quality is the most common mistake. AI can model gaps and generate datasets for missing substances, but it cannot produce defensible outputs from structurally flawed inputs. Organizations that skip data foundation work before deploying AI will generate results that fail audit, regulatory, or customer scrutiny.

Neglecting methodology alignment is the second failure pattern. Different LCA system boundary definitions and allocation approaches can produce materially different results from the same underlying data. If sustainability, engineering, and commercial teams are not aligned on methodology before AI outputs are generated, those outputs will be contested internally before they reach any external stakeholder.

Underestimating the supplier engagement requirement is the third. Scaling sustainability data across complex supply chains is not purely a technology problem. Thousands of suppliers must participate in structured data collection for AI-generated outputs to reflect primary data rather than estimates. That requires change management and supplier enablement, not just software.

And finally: confusing speed with accuracy. AI generates outputs faster. Faster outputs with unquantified uncertainty are not more useful than slower outputs with defined error margins. Speed and methodological precision must be calibrated together.
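One simple way to keep error margins attached to a result is to carry an uncertainty with every contribution and combine them explicitly. The Python sketch below uses root-sum-of-squares propagation, which assumes the individual uncertainties are independent. That is a simplification chosen for illustration, not a method the panel prescribed, and the `combine` helper and its inputs are hypothetical.

```python
import math

def combine(contributions):
    """Sum footprint contributions and propagate their uncertainties.

    contributions: list of (value_kg_co2e, relative_uncertainty) pairs,
    where relative_uncertainty is a fraction such as 0.1 for +/-10%.
    Assumes independent errors, so absolute uncertainties add in quadrature.
    """
    total = sum(value for value, _ in contributions)
    absolute = math.sqrt(sum((value * rel) ** 2 for value, rel in contributions))
    return total, absolute

# Made-up values: a well-characterized part and a modeled, less certain one.
total, uncertainty = combine([(10.0, 0.1), (5.0, 0.4)])
```

In this example the smaller but less certain contribution dominates the combined error margin, which is the kind of signal that tells a team where better data is worth collecting.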

The One Thing Leaders Should Understand Right Now

Organizations that will use AI effectively for sustainability in 3 to 5 years are the ones building structured data foundations today.

The technology is ready. The bottleneck is data: specifically, the absence of product-level, substance-level, supplier-validated data organized in a way that AI can work with. Progress comes from practical, incremental steps: mapping what data exists, identifying the highest-priority gaps, and systematically closing them through supplier engagement, AI-assisted modeling, and expert validation.

As Manuel noted ahead of NAEM OPEX/TECH26, “Progress tends to come from practical steps that build confidence, not from trying to solve everything at once. It’s an evolution, not a revolution.”

That remains the right starting point.

In Practice: How Lenovo ThinkPad Solved This at Scale

The challenge described throughout this keynote is not theoretical. Lenovo’s ThinkPad team worked through it directly with Makersite.

ThinkPad faced increasing pressure in enterprise procurement bids requiring configuration-specific, ISO-aligned Product Carbon Footprints. A single model-level PCF cannot represent variation across customer configurations. Without configuration-level visibility, ThinkPad could not demonstrate how component choices influenced the final footprint. This was a measurable gap in competitive tenders.

The approach Makersite and Lenovo took maps precisely to the methodology described in this article. Supplier Full Material Declarations (FMDs) were ingested and automatically converted into substance-level LCA models, with more than 2.5 million FMDs processed through Makersite. Rather than modeling every product variant independently, ThinkPad shifted to a shared-component approach: SSDs, displays, memory, and chassis were validated once and reused across product families. Makersite then generated the highly granular substance-level LCAs for the parts and assemblies behind each shared component, work that would have taken years manually.
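The shared-component idea can be sketched in Python as a lookup of component footprints that are validated once and then reused by every configuration. This is a simplified illustration, not Lenovo's data or Makersite's API: the component names, the footprint values, and the `configuration_pcf` helper are hypothetical. It also covers only component contributions, whereas a full PCF includes further life cycle stages.

```python
# Hypothetical validated component footprints in kg CO2e per unit.
# Values are illustrative placeholders only.
COMPONENT_PCF = {
    "ssd_512gb": 20.0,
    "ssd_1tb": 35.0,
    "display_14in": 40.0,
    "memory_16gb": 15.0,
    "chassis_a": 25.0,
}

def configuration_pcf(components, library=COMPONENT_PCF):
    """Sum validated component footprints for one customer configuration.

    Raises KeyError rather than guessing when a component has no
    validated footprint, so gaps surface instead of being averaged away.
    """
    missing = [c for c in components if c not in library]
    if missing:
        raise KeyError(f"no validated footprint for: {missing}")
    return sum(library[c] for c in components)

base = ["ssd_512gb", "display_14in", "memory_16gb", "chassis_a"]
upgraded = ["ssd_1tb", "display_14in", "memory_16gb", "chassis_a"]

# Effect of one component choice on the configuration footprint.
delta = configuration_pcf(upgraded) - configuration_pcf(base)
```

Because each component is modeled once, adding a configuration costs a lookup rather than a new LCA, and the difference between two configurations traces back to a specific component choice.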

The outcome: configuration-level, ISO-aligned PCFs generated at scale, certified, and usable in enterprise sales conversations. ThinkPad sellers can now demonstrate how specific component choices move the carbon footprint up or down, using traceable data rather than estimates.

Internally, sustainability, engineering, and commercial teams now work from the same data. That alignment between people, methodology, and system is what makes the outputs usable, not just accurate.

Next Steps

If your organization is navigating product-level sustainability data challenges, whether for LCA, Scope 3, PCF, or compliance, the starting point is understanding where your data stands and where the highest-impact gaps exist. Makersite works with enterprise manufacturers to build and scale that foundation.